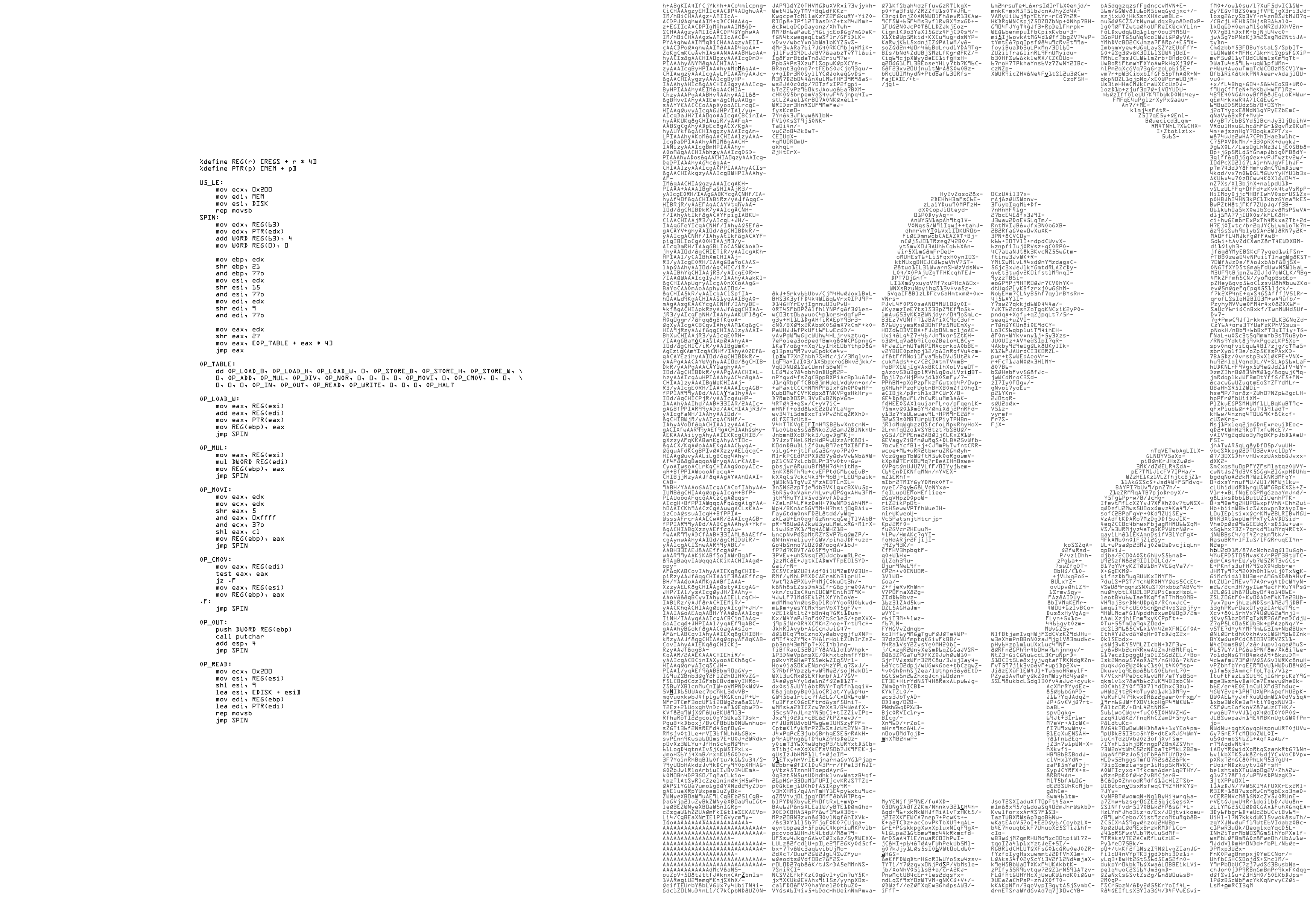

It looks like a CrackMe, or capture the flag exercise. The x86 assembly is clearly a virtual machine, and I assumed the block of text on the right would be a binary that runs on that virtual machine. I call the machine, for lack of a better name, Dan32, because as I later found out, it is a 32-bit virtual machine, and originates from Denmark.

The block of text on the right is base64 encoded, which is easy enough to to convert back into a binary file, but since it is an image, we can’t directly get at that block in a text format without doing some kind of optical character recognition. We can guess it is base64 encoded by the characters used, and really after you’ve seen a lot of base64, you can usually spot it pretty easily.

I tried a few online OCR services, which did not work, and since I had invested almost no time into this, I was ready to say the hell with it. I was not about to type all that base64 text into my text editor by hand.

I did end up solving this puzzle and creating tools to reverse engineer it, what follows is a detailed writeup, read on for more.

Note: If you are using a blocker such as Privacy Badger, like I do, I’ve noticed the terminal movie playback embeds from asciinema.org may be blocked by default. If you wish to see those in this post, you’ll have to toggle that domain to allow in your plugin, though you don’t need to accept cookies from that domain for it to work.

Getting the Base64 Text

Staring at it a bit longer, we notice certain characters in the base64 side are bolded, and if we go through and write down each bolded character,

it spells out some nonsense MzJoYWNrZXI1NTd6amt6aS5vbmlvbgo..

I thought, since we are looking at a massive bunch of base64, that maybe this was also base64 encoded. We can use a tool called rax2 which

is a part of Radare2 in order to decode it like this:

1 2 | |

It’s a vanity .onion address on the TOR network. The site, which unfortunately is not online anymore, has downloads for both the assembly listing, and the base64,

saving us from needing to worry about how to get those characters into our computer by hand.

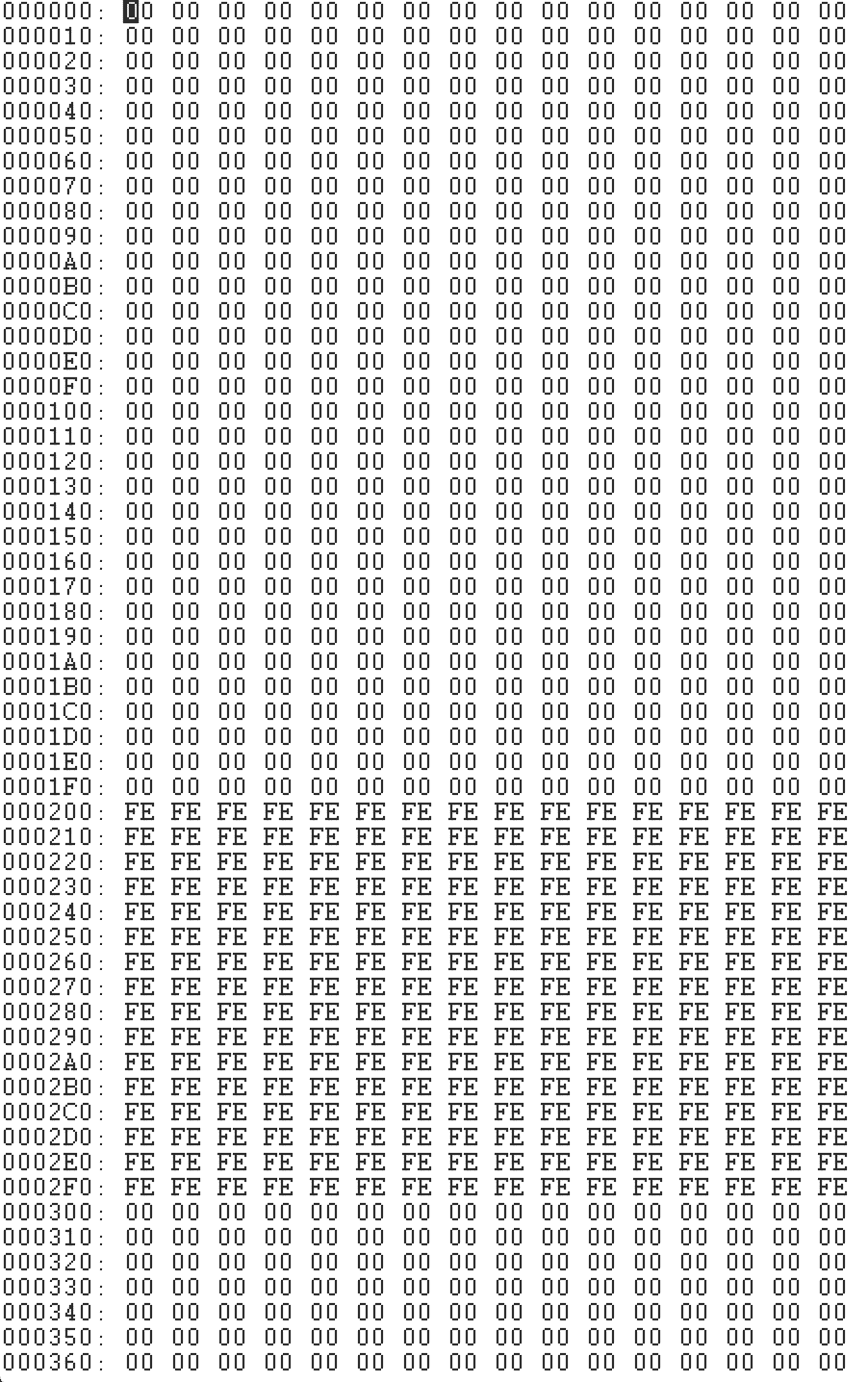

My approach to these sorts of files, that might be malicious or not, is usually to use hexdump or a hex editor program to look at them

before going any further. After doing this to the base64 file, I notice that it is full of ANSI terminal escape sequences, and that these

ones are for positioning text at (x,y) coordinates, setting bolding etc. This is because if you were to cat the file to your terminal,

it would reproduce the formatting seen in the image, with the question mark and all, which is pretty cute, and are actually required to

put the text in the right order to be decoded.

Before I cat this to my terminal, I wrote a script to check each of the ANSI escape sequences to make sure they were only positional

and style commands, and nothing weird or malicious. They turned out alright, so I printed it to my terminal and copy pasted the

text into a file. Then I wrote another script to remove the end of line hyphens, join it all together, and base64 decode it, resulting

in a binary file that I named disk.img

You can find the complete source code for all of the Radare2 plugins I wrote to solve this on my github.

The Virtual Machine

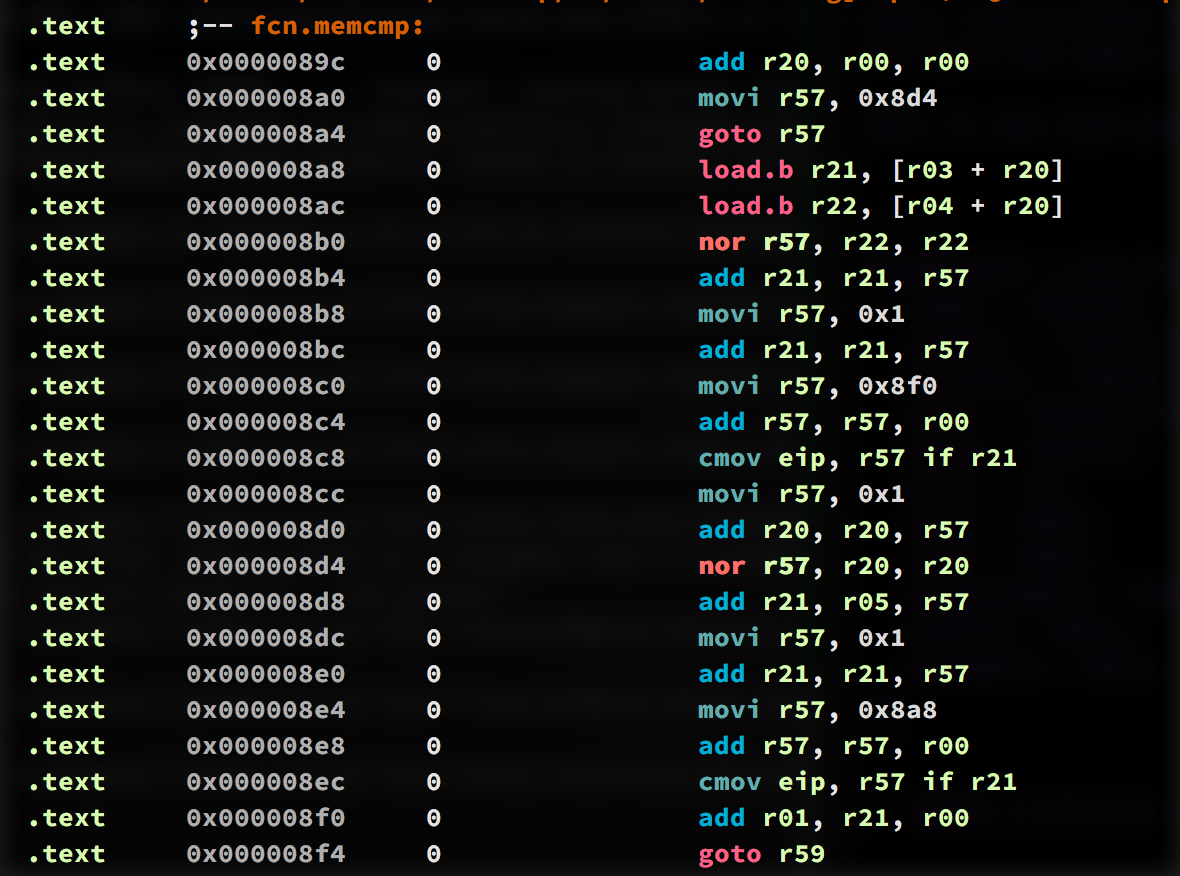

The provided x86 assembly for the virtual machine is bare bones, but it tells us everything we need to know to run this binary.

The label OP_TABLE points to an enumeration of each opcode the VM supports, and the order, so we know the numeric value of the that op.

Some more information we learn from the given asm is

- There are at least 64 registers in this machine

- It must be a 32-bit machine

%define REG(r) [REGS + r * 4]Registers are 32-bits wide%define PTR(p) [MEM + p]It requires some read/write memory spacelea esi [DISK + esi]It requires some read/write space to act as a diskmov eax, [OP_TABLE + eax * 4]Every opcode is 4 bytes widecmovis the only way to do conditionals

Even after learning all that information, it’s incomplete, some of the opcodes are not given implementations, such as write,

in, div, and the various sized load.x, store.x, and nor, to name a few. So we’ll need to look at what’s given,

and implement those ourselves.

My Philosophy of Reversing

Here’s where a major part of my reverse engineering philosophy comes in, I don’t as a rule like to run random binaries given to me,

especially in malware/crackme situations. If I take the VM’s assembly listing, complete the missing implementations, and run the

mystery binary disk.img, I have literally no idea what it is capable of at this point. Worst case scenario is that binary knows

about flaw in the given virtual machine, and exploits it for a VM escape onto my host system and starts doing shit.

I’m heavy on the static analysis side, but at this point I don’t have any debugger, or analysis tools that even understand this made up computer architecture. What I want to do, is use Radare2 to reverse engineer the binary, so I’m going to need to teach Radare2 about this file format, computer architecture, invent a textural assembly language, and so on. And that’s the real fun of this challenge for me, honestly, so that’s what I did. Radare2 allows you to write plugins to extend it, so it can understand any CPU, real or imagined, and simulate its running through ESIL (Evaluable Strings Intermediate Language).

Radare2 Plugins

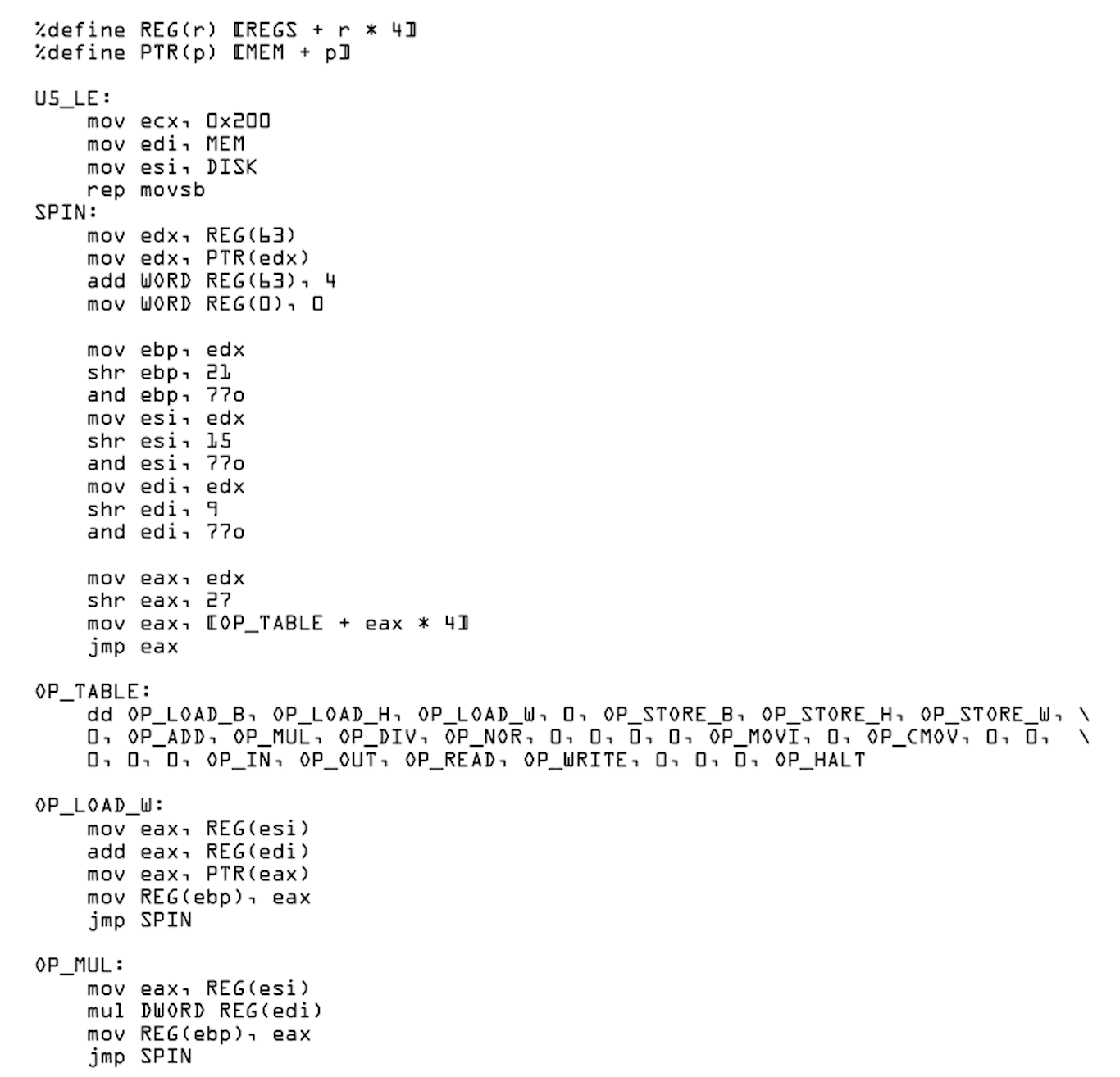

The first Radare2 plugin to write, is the asm plugin. This plugin takes the 32-bit machine level opcodes and fills in a structure with information about that opcode, its arguments, and it provides a textual representation for viewing a disassembly listing.

In order to do this, we’ll write a plugin in C. The asm plugin’s main function has the following prototype

1

| |

The parameters to disassemble are:

RAsm *ais the current assembler contextRAsmOp *opis the structure we need to fill inut8 *bufare the opcode bytes we are disassemblingut64 lenis the length ofbuf

The important fields of RAsmOp to fill in here, are buf_asm which holds the textual representation of the disassembled opcode,

and size, the size of the opcode.

Looking at the provided x86 assembly code, we can see how to dismantle a 32-bit opcode into its constituent parts, remember all opcodes are 4 bytes long or 32-bits.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 | |

Becomes

1 2 3 4 5 6 7 8 9 10 11 12 | |

Next, for convenience, we make a lookup table that maps 0 to 63 to the corresponding register name. I happen to know from

the future, that r62 is the stack pointer, and r63 is the instruction pointer, but I didn’t know this at the time.

It makes reading the disassembly a lot easier though once we know this.

1 2 3 4 5 6 7 8 9 10 11 12 | |

Since in the disassembly output we’re going to be referencing things by register name a lot, I grab the textual names for each argument as well.

1 2 3 | |

What follows in the disassemble function is a switch statement on op_index, where we just need to fill in the

op size and the textual representation of the opcode itself. So I’ll show a few of those here, you can see the

full source of these plugins here

1 2 3 4 | |

So for example the nor instruction, which wasn’t provided in the image, just uses snprintf to write out our

human readable disassembly, and sets the op->size = 4. This ends up producing something like nor r21, r57, r57.

Quickly taking a look at another example, movi is the move immediate value instruction, and looks like this:

1 2 3 4 5 6 7 8 9 10 11 | |

Notice op->size = 4 for all instructions, and setting op->size = -1 indicates an invalid operation. The above movi instruction actually

encodes an immediate value directly into the opcode itself. This is the only instruction which does this, all other instructions

must move values into a register to operate on them. Again, this is a straight translation from from the given x86 asm.

Other instructions had to be put together just following the pattern that was set out for us. For example, div, mul, nor

all work the same as the given mul opcode. All said, it is not a lot of work to get a fully functioning disassembler going in Radare2.

Here is the last part of the plugin, where we hook our code up by setting callbacks, and some information:

1 2 3 4 5 6 7 8 9 10 11 12 13 | |

And here’s the result, a nice looking assembly readout that we can use to start reversing the binary.

Radare Analysis Plugin

With the above plugin we can now see human readable disassembly of the binary, but Radare doesn’t have enough information about this architecture yet to allow us to step through the program and simulate it like you would in a debugger. And you can’t yet perform static analysis like you would get with IDA. Radare supports about one zillion architectures already, but since this CPU was probably invented just for this challenge, we’ll have to add support ourselves.

Radare’s answer to this is ESIL, (Evaluable Strings Intermediate Language), providing a register profile for the CPU, and using those to create an analysis plugin. An analysis plugin expects us to implement a function like this, to set the register profile.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 | |

Here we specify all 64 general purpose registers in the machine, and also give aliases to registers that have special meaning.

The format is gpr <registername> .<size in bits> <offset>.

With this we can create a register file containing registers of various sizes, which can overlap. For example in x86, we can specify

register gpr ax .16 0, but also specify the high and low bytes as gpr ah .8 8 and gpr al .8 0.

Dan32 doesn’t have overlapping registers, or high and low register access by name, so we don’t need to do this.

Some register aliases are A0, A1, A2, which are for arguments that are passed to functions via register, which is pretty common in this binary. LR is the link register, which like on an ARM CPU holds the return address of a function, PC, is the instruction pointer, and SP is the stack pointer, so I’ve filled those in after having gotten some experience with the binary’s two calling conventions.

The next task for the analysis plugin is to create ESIL for each and every instruction supported by the CPU. There are not many instructions so this didn’t take very long.

The plugin must implement an analysis function with the following prototype, which looks extremely similar to the asm plugin function:

1

| |

Here, we’re asked to fill in more information about the opcode in the given RAnalOp *op parameter, it looks something like this:

1 2 3 4 5 6 7 8 9 10 11 12 13 | |

All of these are pretty important for proper analysis, but the most important, so that we can simulate this binary inside

radare2, without running it on the untrusted VM we were given, is the ESIL. Here is an example of ESIL for movi, the

move immediate value instruction:

1 2 3 4 5 | |

ESIL is a stack machine, turing complete, so it is able to represent the instructions for any CPU, it is like a ridiculous

sort of microcode almost. A more complicated instruction cmov, the conditional move instruction, looks like this:

1 2 3 4 5 | |

So after each instruction is codified by type and given an ESIL representation, we’re done. If you are interested in how ESIL works, here’s the docs. I’ve written some pretty crazy ESIL for the disk sector read/write code, and stack machines are not my favourite, but they work :) Here is some of the longest ESIL I wrote for one opcode. It reads 512 bytes from a numbered disk sector, into a given memory address.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 | |

Disassembling the binary in Radare2

Ok, so now we’re all set to get a disassembly view of this binary, we’ll just load it up in Radare2, hit play to see how it goes.

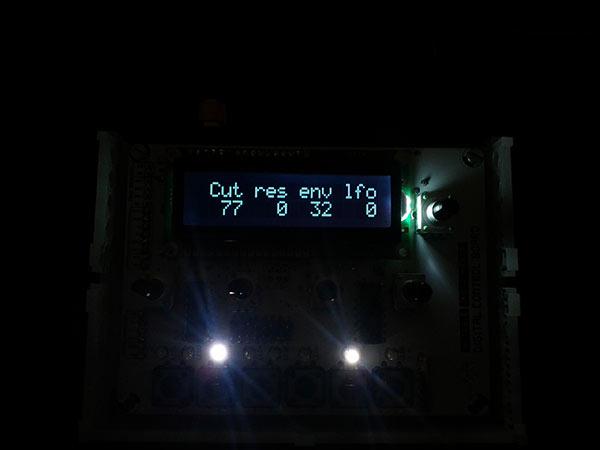

“Wrong Endianness”. Now, there are a few things going on here, so let’s look at the first instruction: movi r00, 0x78200.

I don’t want to get to bogged down in the details, but I know from the future, that register r00 is like the zero register

on a MIPS system, it always contains the value 0, and so here writing 0x78200 to that register, is effectively a no-op,

and we’ll see why that’s done in the next part.

Next up we have movi eip, 0x14. There are no jump instructions in this opcode set, and unlike x86, you can write to the

instruction pointer register to get a jump. Interestingly, jumping to 0x14 is not a multiple of 4, and so we’re jumping out of alignment,

which is why we’re seeing the disassembler isn’t able to interpret a few instructions after that at first.

When we get to the address 0x14, we end up at series of instructions that loads immediates, and then uses out to print them

out to the display.

1 2 3 4 5 6 7 8 9 10 11 | |

A little bit of radare knowledge, the immediate values were displayed as hex to begin with, so I wrote a little radare expression

to hint to it that those immediates are actually string or char values using the ahi command, which stands for “analyse hint immediate”.

Radare2 is terse as hell, and you get very used to it, and probably, maybe, start loving it. The expression below basically creates

a range from the current address, denoted as $$, to $$ + 17 * 8 with a step of 8 bytes. The @@= functions as an iterator, which runs

the command ahi s on each address in the range, telling radare the immediate values are character values.

1

| |

Anyway, the real problem is we’re interpreting the binary as little endian, when it’s actually big endian. So we can just go back to our plugin and fix that pretty simply in the disassemble function by reversing the bytes, and setting the endian properly.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 | |

Correct Endian

Back to the entrypoint of our binary, what was once a no-op, when read backwards, jumps us past all the “Wrong Endian” stuff, and begins displaying the binary properly so we can reverse it.

The opcode 87e00180 when read in big endian jumps us with movi eip, 0xc, and another jump movi eip, 0xa8, bringing us

finally to some actual code.

1 2 3 4 5 6 7 | |

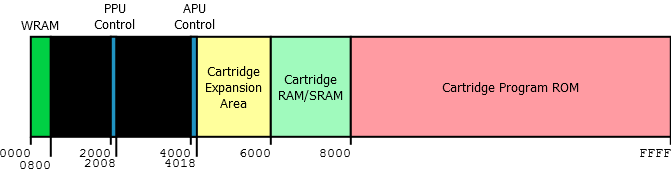

This is the first in a series of tricks and tests that the binary performs on the virtual machine itself to make sure it is implemented properly. With the use of a bin plugin for dan32, which I’m not going to bore you with here but is available on GitHub with the rest of the code, I’ve tried to setup a memory layout that would be familiar for someone like myself who works with ELF or PE files. Here is that layout.

1 2 3 4 5 6 7 | |

Remembering the DISK address that was mentioned in the x86 assembly VM, which is meant to represent a readable writeable

disk area from which the program is loaded. This area stores disk.img in the .diskrom section at address 0x200000. I probably

shouldn’t have called it a diskrom, since you can write to it, but I didn’t know it was going to be written to at the time, so

it’s too late now. I actually believed it was going to be something like a game cartridge rom at first, but oh well.

The code is executed from the .text section, with entrypoint 0x0, and we have a .bss section which contains some initialized

data that is used in the program. The read and write instructions are used to copy data from the disk into memory by 0x200 byte

sectors.

So the trick here, is that at 0xb4 we are writing from r00, which always contains zero to address 0xbe, which is altering

an instruction. Then, if your read instruction works properly this is immediately corrected by reloading the entire first

sector from .diskrom back into memory, undoing the damage. If your read instruction is not working, you will be greeted by

the text “Disk read error!” and the program will halt.

Notice how the analysis plugin is working, showing beautiful ascii arrows that point to the destinations of our jumps. When

the zero is written to address 0xbe, it modifies the instruction, and we see the control flow is taking us directly towards

“Disk read error” and a halt. The read immediately fixes this and the control flow updates.

The Next Test

Next we move on to 0x14c, which is an area of the binary that sets up the stack pointer, and reads the rest

of the program from .diskrom one sector at a time.

I guess here we can get our first look at how dan32 goes about things.

1 2 3 4 5 6 7 8 | |

Here’s some things to take note of right off the bat:

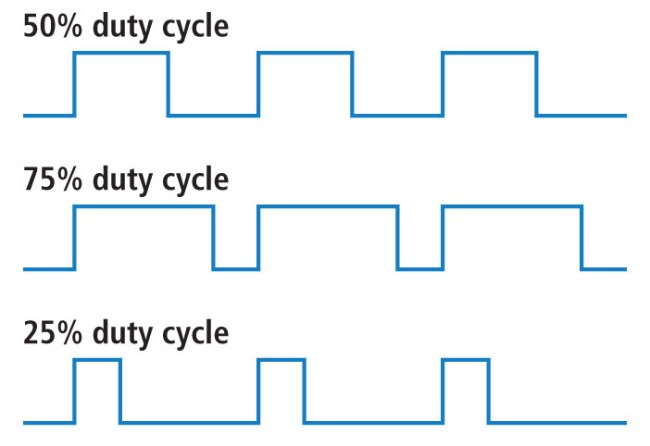

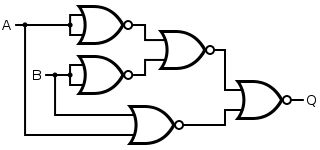

- There is no subtraction instruction

- There is no concept of signed numbers

- We can still have negative numbers using 2’s complement

- There are no other bitwise logical operations besides NOR

- NOR is universal, and can construct any other gate

- There is no direct way to move one register into another one, so addition + 0 is used

nor(a, a)flips all the bits ofanor(a, a)is equivalent to-(a + 1)in 2’s complementr00is a hardware zero registerr57is used as a temporary register

There’s a few patterns we see using NOR throughout this binary. Above we want to save the instruction pointer to r61, and

then subtract 4 from it. This is done many times in this binary like this:

1 2 3 4 5 6 | |

This is a roundabout way of just saying r61 = eip - 4, but that’s what we’re dealing with here :)

The next test the binary performs on the virtual machine, is to test the div instruction. Since

this instruction was not provided in the x86 assembly code, it is to ensure we’ve got the argument

order right, and we’re not allowing division by zero. If we’ve done it wrong, we’re sent off to

some code that prints “ALU Malfunction (DIV)” and halts the program.

By the way, these symbols such as fcn.alu_malfunction_div, fcn.main, and so on, were added by me

while reversing the binary to make it more clear what is going on.

1 2 3 4 5 6 7 | |

That’s also our first look at conditionals in dan32. There are no compare instructions, there is

no zero flag, and no conditional jumps like jne, as you find in other instruction sets.

An interesting side note I guess, is that there is no real compare instruction in x86 either, the

cmp instruction on that processor is actually an alias for subtracting the two values. When

they are equal, since a - a = 0, this sets the zero flag, which is what instructions like jne

are conditional on.

The Main Function

Now that the Radare plugins are working, let’s have a look around the binary, simulate it a bit, and look around. This is loading a project file where I’ve already reversed the entire binary, but gives a good idea of how it’s working.

Here’s where we start getting some idea of what this binary is up to, and finally get to see some proper

functions such as print(), scan(), memcmp() and things like that implemented.

1 2 3 4 5 6 | |

First up, radare has identified a string “Password: “, and helpfully renamed its address as the symbol

str.password for us. Here we can see one of the two calling conventions in action. This one is a

lot like fastcall, where we load the first few arguments of a function into registers r03, r04, r05,

and end up with our return value in r01.

Remember I identified r59 as the link register, and that is used as our return value. So here, the

calling convention is to calculate the return address, eip + 8, two instructions away, and store it

into r59, we then load the address of the print function fcn.print into a temp register, and jump

there.

Throughout the binary, r57 is always used as a temporary register. There are others such as r20, r21

which are always used as counters or array indices. In fact, this assembly code is so consistent in the

way it does things and which registers it uses, that I wonder if it was emitted by a machine, or written by

hand by someone who is just awesome.

Now that we know our arguments, our return address, and where we’re going, that about fully describes this calling convention. There is also a stack based calling convention like you would find on x86 32-bit, which I may write about later.

The Print Function

So don’t worry I’m not going to bore you to death by literally explaining every function, but this print one is a fairly simple example to start with.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 | |

This function is simple, but normally to reverse a difficult function, I will slowly replace elements of the disassembly with C, until I have a C function. In this case, we’d have a for loop:

1 2 3 4 5 | |

The Purpose of the Program

I’ve kept from mentioning the actual purpose of this program for way too much of this article. If written in C, the main function would just about look like the following code. This was figured out by reversing each function in turn, and I got a happy surprise at the end, we’re going to be dealing with encryption.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 | |

So what’s going on here, is we’re going to do an in-place decryption of the DISK section if we’ve

entered the right passphrase.

We’re able to figure all this out, without running this binary at all, through static analysis and a bit of ESIL simulation. I guess the question is how did I know which function was doing the decryption, what actual encryption algorithm was being used, and how I’m going to figure out the passphrase without even running the binary.

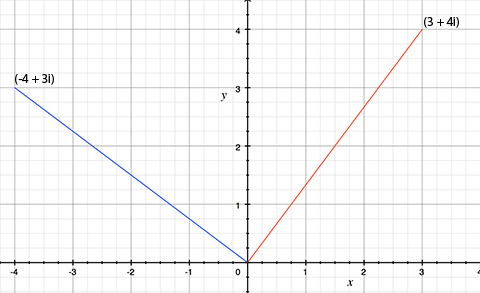

The answer to the first question is that I knew I would probably need to find XOR somewhere

in this program which would XOR the ciphertext with the key stream, but since we have no XOR

instruction, I knew it would need to be created with a group of NOR, which I found pretty easily.

So I spotted that pretty easily, and pinpointed the main decryption routine.

1 2 3 4 5 | |

The answer to how did I know which encryption algorithm it was, is more funny. I didn’t know which one it was. I had stepped through the key scheduling function a few times, after it prints “Initializing Encryption”, and thought that it was basically key stretching the passphrase. It was only later when I was randomly reading through a writeup on some malware which used RC4 encryption, that I realized what I was looking at the same RC4 key scheduling algorithm.

Cracking the Passphrase

The main encrypted part of the binary was identified by the address that was being passed to the

decrypt() function, which was 0xc00. I also previously noticed this while running the binary

through the entropy function of the program binwalk. Here is the output from binwalk -E

1 2 3 4 5 6 | |

One of the weaknesses of some encryption schemes, is in how it checks if the passphrase is valid before decryption. Say for example you enter a passphrase on a zip file or something, and the unzip program just blindly decrypts the file without knowing if the password is valid. It’s going to produce total garbage if the passphrase is wrong, and the program won’t have any way of letting you know that you’ve entered the wrong password.

So a common, bad, way to verify the password first, is to have some known ciphertext, plaintext pair that is encrypted using the passphrase right in the binary. You enter the passphrase, it decrypts this small ciphertext, compares it to the known plaintext, and if it’s correct, it says “yay” and moves on to decrypting the rest of the file. If it’s wrong, it says “boo”, and doesn’t decrypt the file into garbage.

This is what’s happening in our dan32 binary. The known ciphertext, plaintext pair is included, meaning we just have to crack that.

Here we can see that before decrypting the DISK section, it tries to decrypt

a small 56 byte buffer, and then compares that to a valid string that is included in the program.

1 2 3 4 5 6 | |

Here we can output the short encrypted buffer, and its valid decryption. If the passphrase we give

doesn’t decrypt this short buffer correctly, the program will halt. Here I use the px Radare command

to do a hexdump of the test ciphertext, and another ps command to print the plaintext string.

1 2 3 4 5 6 7 8 9 10 11 | |

That means in order to crack this passphrase, I only need to figure out how to successfully decrypt this 56 byte buffer, which is something I can do entirely outside of this binary. I decided to reverse the key scheduling and decryption routine, and rewrite it in C so that I could brute force the password quickly outside of this environment, and outside of Radare.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 | |

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 | |

And finally the decrypt routine.

1 2 3 4 5 6 7 8 | |

I made this into a complete program, that takes a passphrase as argument, and then wrapped it in

a short Ruby script that repeatedly tries passwords from a list I have, until the decrypted result matches.

This only took about 3 minutes, the passphrase ended up being agent This was lucky, because it could

have been a lot harder if it wasn’t a simple word, I would have needed to use hashcat or something a

bit more sophisticated. There are also flaws with RC4 itself, which directly relate to the problems

with WEP, but I didn’t need to go that route.

I can’t really call writing this code a waste of time, since I ended up needing to do it in order to

actually identify the algorithm, but there are far easier ways to decrypt RC4 that I could have used,

for example, Radare2 comes with a program called rahash2 which can, among about a zillion other things,

be used to decrypt RC4.

1 2 3 4 5 6 7 8 9 10 11 | |

Decrypting the Disk Image

At this point I am thinking I will just dump the high entropy section of the binary from 0xc00 onwards

out to a separate file, and decrypt it with rahash2 and be done with it, but when I try this, I end

up with unintelligible garbage, that isn’t proper dan32 code, and isn’t anything else I can

recognize.

The DISK section is divided into 512 byte sectors, and it turns out they are not decrypted in the order they

appear in the file. The order of the pseudorandomly generated keystream matters since it’s a stream cipher,

and so that is why I’m getting garbage out. I decided then to just simulate the decryption process within Radare

using ESIL, since I put so much work into properly defining each opcode in ESIL, it does simulate the VM perfectly.

The only problem is, that I have not implemented the in and out opcodes for doing IO, so I would be

running the program blind, and be unable to enter the passphrase or see printed output.

Fixing the IO Problem

An easy way to avoid writing the in opcode, is for me to simulate the program up until it is about to

ask me for a passphrase, stop there, and just write the passphrase into memory at the right address, and

skip over the scan() function entirely and continue afterwards, so that’s what I’ve decided to do.

For the out opcode, there is a less hacky solution. I can use ESIL to simulate an interrupt, and attach

that interrupt to an external program that will receive the character value to be printed. I wrote a short

Ruby script which accepts an argument and prints it to the standard output. And inside Radare2 simulate the

binary like this:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 | |

The Result

This takes a while to complete, so I just went off and did something else. Another thing, not shown here is that the binary often calls functions that just do nothing but waste enormous amounts of time counting, which have no effect on the output. I patched these calls out of the binary so I wouldn’t have to wait 2000 years for it to finish.

Once all is said and done, the binary has completely rewritten the DISK section into yet another binary, and we’re

given this message:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 | |

I dump the DISK segment into an actual file, and reload that in Radare, and sure enough it has decrypted itself into

a webserver written in dan32. I reversed this new binary for a while, and found it contained:

- Embedded html files

- A GIF of Morpheus from the Matrix

- A PNG background file

- Some lzma compressed issues of Phrack Magazine

- The Hacker’s Manifesto, by the Mentor

- Some zlib compressed files

- And the finally, the flag, written in Danish

I used binwalk to extract these from the binary and looked through the contents. I got the flag, so I’m calling this one done. Good experience overall, 10/10 would crack again. I’m so proficient in reading dan32 assembly now, that it’s a shame I’ll probably never have any use for it again, it’s a pretty nice VM.

]]>